1. Introduction: Navigating the Next Wave of AI

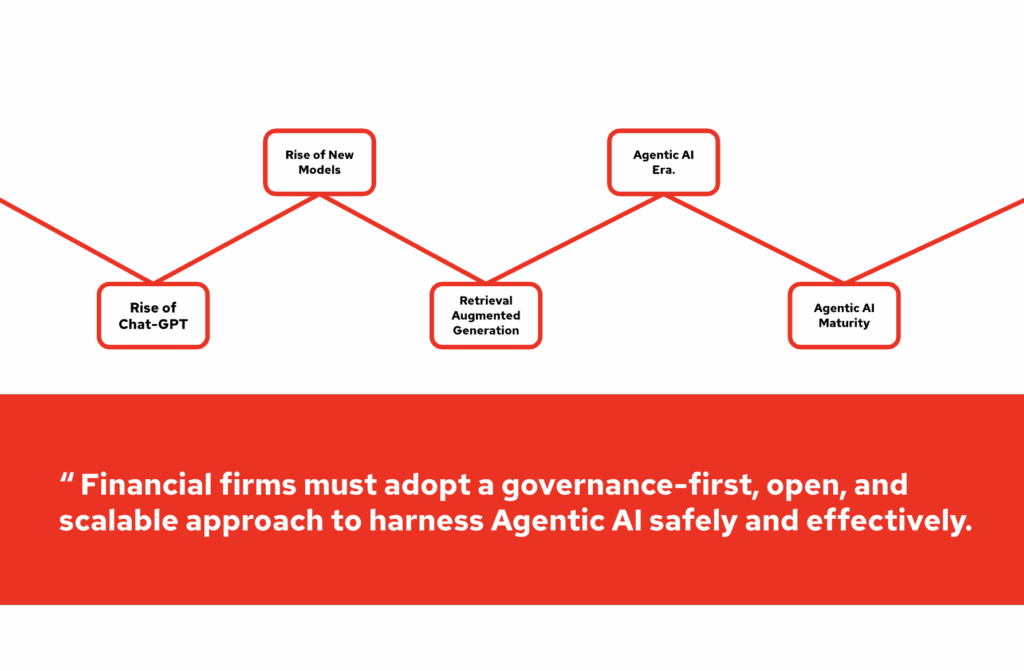

Generative AI is no longer an experiment; it is reshaping how institutions operate. The emergence of Agentic AI, where AI systems behave as autonomous agents, marks the next stage in this evolution.

This paper proposes a reference architecture for the Agentic AI adoption journey, outlining the essential components required for a scalable and secure implementation across multi-cloud environments. It details the integration of generative AI layers, inference runtimes, and governance frameworks, while providing critical guidance on choosing the right vendor to ensure a secure software supply chain and access to curated high-performance models.

2. Industry Trends: Disruption and Opportunity

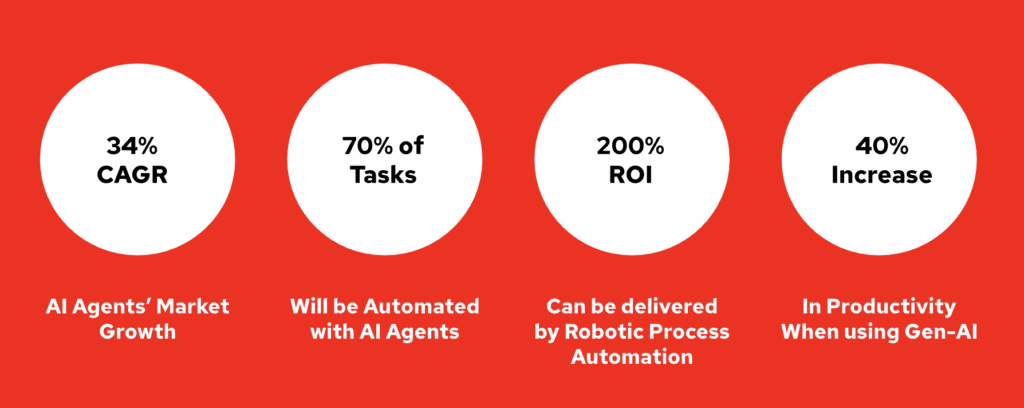

The financial sector is at a tipping point where automation and intelligence converge. Industry analysts estimate that up to 70% of knowledge work tasks can be automated with Generative AI [1], unlocking unprecedented efficiency. Early adopters of AI agents already report productivity gains of 40% [2], and the Agentic AI market is expanding at a projected 34% CAGR [3].

Past waves of automation, such as Robotic Process Automation (RPA), demonstrated an ROI of over 200% by removing manual, rules-based work [4]. Agentic AI extends that success by adding reasoning, planning, and adaptability, enabling machines not only to execute tasks but also to make and optimize decisions.

With frameworks such as LangChain, CrewAI, and n8n, complex workflows become modular and composable. Agents can be deployed and consumed like microservices, giving developers a streamlined experience while embedding governance, compliance, and trust. This makes Agentic AI not just a tool for efficiency, but a strategic platform for innovation across financial services.

3. The Case for AI Agents in Finance

Financial services organizations face continuous pressure to reduce costs, ensure compliance, and deliver seamless, always-on services. Agentic AI directly addresses these priorities:

Operational efficiency – Automating back-office settlement, reconciliation, and compliance reporting.

Cost reduction – Removing manual overhead in repetitive decision cycles.

Operational scalability – Scaling services dynamically to meet spikes in demand such as market volatility, seasonal transaction surges, or new product rollouts, without requiring proportional increases in staffing or infrastructure.

24/7 availability – Supporting customer queries, onboarding, and financial advice without downtime.

Decision support – Offering real-time, explainable insights for risk, fraud, and portfolio management.

4. Challenges: Complexity Meets Compliance

Adopting Agentic AI requires more than adding a model to workflows. It comes with structural and organizational challenges:

Integration complexity – Legacy systems and siloed data make connecting agentic AI difficult. Core banking and compliance platforms were not built for real-time AI orchestration.

High cost of adoption – Building and scaling agent frameworks requires significant investment in infrastructure, talent, and governance. The cost of pilots and compliance controls can be high.

Data privacy and sovereignty – Sensitive financial data must remain within jurisdictional boundaries. Cross-border operations add legal and technical complexity.

Vulnerability to attacks – AI agents introduce new attack surfaces, from prompt injection to data poisoning. Without guardrails, agents can be exploited or manipulated.

Regulation and compliance pressure – The EU AI Act, ISO standards, and banking regulators demand strict oversight. Non-compliance carries heavy penalties and reputational risk.

Bias and fairness – Historical data often contains bias. Unchecked, this can lead to unfair or discriminatory outcomes in credit, fraud, or risk workflows.

Ethical accountability – As autonomy grows, institutions must define clear lines of responsibility. Human oversight remains essential for trust and governance.

5. Reference Architecture for Agentic AI

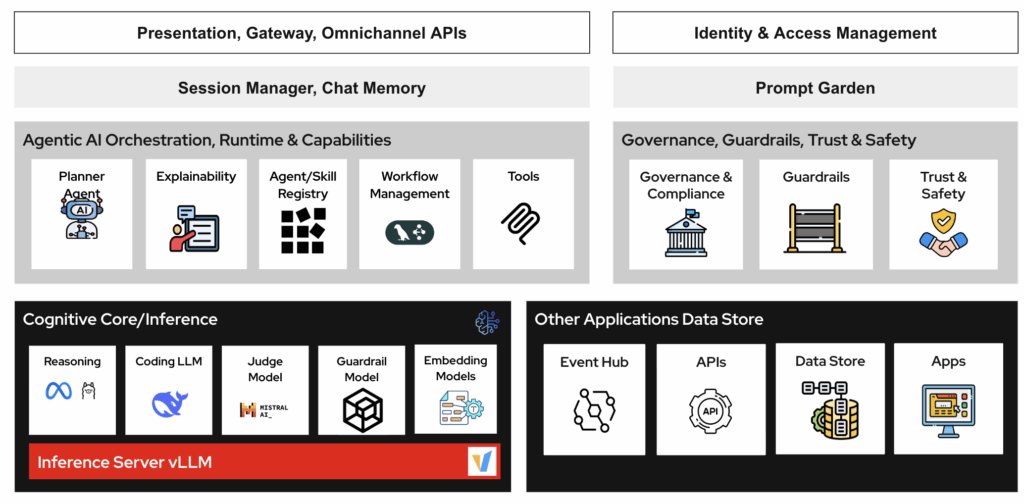

The proposed reference architecture creates a layered design that ensures trust, scalability, and developer freedom:

Multi-cloud foundation – Kubernetes provides a consistent, portable platform across private, public, and edge environments.

Generative AI platform – Llama Stack delivers a streamlined developer experience with APIs, orchestration, and safety primitives for model deployment.

Inference runtime – vLLM offers high-performance, low-latency inference to support real-time financial workloads.

Governance and guardrails – TrustyAI supports explainability, fairness, and compliance, including red-teaming, auditability, and policy alignment.

Secure software supply chain – A secure model registry from a vendor that distributes curated, verified models with provenance tracking.

Agent developer layer – Agents run as containers on OpenShift Serverless. Developers can choose frameworks such as LangChain, LangGraph, or CrewAI, while tools are connected through the Model Context Protocol (MCP) for discoverability and interoperability.
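OpenShift Serverless is built on Knative Serving, so a containerized agent can be described as a Knative `Service`. The sketch below builds such a manifest as a plain Python dictionary. The agent name, image reference, framework label and the `MCP_SERVER_URL` environment variable are hypothetical; only the `serving.knative.dev/v1` resource shape and the Knative autoscaling annotations come from Knative itself.

```python
import json
from typing import Any


def agent_service_manifest(name: str, image: str, framework: str,
                           mcp_server_url: str, min_scale: int = 0,
                           max_scale: int = 10) -> dict[str, Any]:
    """Build a Knative Service manifest for one containerized agent."""
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            # Hypothetical label recording which agent framework the container uses.
            "labels": {"agent-framework": framework},
        },
        "spec": {
            "template": {
                "metadata": {
                    "annotations": {
                        # Knative autoscaling bounds; min-scale "0" permits scale-to-zero.
                        "autoscaling.knative.dev/min-scale": str(min_scale),
                        "autoscaling.knative.dev/max-scale": str(max_scale),
                    }
                },
                "spec": {
                    "containers": [{
                        "image": image,
                        # Hypothetical env var the agent code reads to locate its MCP tool server.
                        "env": [{"name": "MCP_SERVER_URL", "value": mcp_server_url}],
                    }]
                },
            }
        },
    }


manifest = agent_service_manifest(
    name="fraud-monitor-agent",
    image="registry.example.com/agents/fraud-monitor:1.0",
    framework="langgraph",
    mcp_server_url="http://mcp-tools.agents.svc.cluster.local/mcp",
)
# `oc apply -f` accepts JSON as well as YAML, so this output can be saved and applied.
print(json.dumps(manifest, indent=2))
```

With this shape, the choice of framework stays inside the container image. The platform only sees a uniform service that it can revision, scale and observe.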

6. Context Engineering: Designing Intelligent Behavior

The reliability of an AI agent depends on how well its context is defined. Context engineering creates the foundation for trustworthy, explainable behavior and ensures that agents operate with precision, consistency, and compliance.

Prompt context – Frames the agent’s task by defining goals, constraints, and policies. This includes prompt engineering: the practice of designing and structuring instructions so that models generate accurate, relevant, and compliant outputs. Prompt engineering also helps evaluate which models perform best under specific input sequences, token lengths, and workloads, ensuring quality responses in real-world deployments.

Dynamic memory – Retains session history and reasoning steps, allowing agents to carry forward prior interactions, avoid repetition, and adapt behavior to evolving situations.

Environment context – Connects agents with external systems such as APIs, databases, trading platforms, or regulatory feeds. This ensures decisions are based on live, trusted data rather than static prompts.

Guardrails – Apply policies, compliance rules, and organizational boundaries. Guardrails enforce ethical and regulatory standards while preventing agents from acting outside their intended scope.

By combining prompt engineering, dynamic memory, real-time environment integration, and guardrails, financial institutions can build agentic AI systems that deliver high-quality, explainable outcomes.
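The four context layers can be combined in a single object that assembles the messages sent to a model. The following is a minimal sketch under stated assumptions. The class name, the six-turn memory window, the blocked phrases and the reconciliation example are all hypothetical. The guardrail is a deliberately naive phrase filter and is not a substitute for a production policy engine.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """Hypothetical holder for the four context layers described above."""
    goal: str                      # prompt context: the task
    constraints: list[str]         # prompt context: rules and policies
    memory: deque = field(default_factory=lambda: deque(maxlen=6))  # dynamic memory
    blocked_phrases: tuple[str, ...] = ("override limit", "disable monitoring")  # guardrail

    def remember(self, role: str, text: str) -> None:
        # Keep only the most recent turns; older ones drop off automatically.
        self.memory.append({"role": role, "content": text})

    def build_messages(self, user_input: str, environment: dict[str, str]) -> list[dict[str, str]]:
        # Guardrail: reject out-of-scope requests before any model call is made.
        if any(p in user_input.lower() for p in self.blocked_phrases):
            raise PermissionError("request is outside this agent's permitted scope")
        system = self.goal + "\nConstraints:\n" + "\n".join(f"- {c}" for c in self.constraints)
        # Environment context: live values are injected per request, not hard-coded in the prompt.
        live = "\n".join(f"{k}: {v}" for k, v in sorted(environment.items()))
        return ([{"role": "system", "content": system}]
                + list(self.memory)
                + [{"role": "user", "content": f"{user_input}\n\nLive data:\n{live}"}])


ctx = AgentContext(
    goal="You are a reconciliation assistant for a retail bank.",
    constraints=["Cite the source record for every figure.",
                 "Escalate discrepancies above 10,000 EUR to a human reviewer."],
)
ctx.remember("user", "Reconcile account 123 for March.")
ctx.remember("assistant", "Two ledger entries have no matching bank record.")
messages = ctx.build_messages("Explain the unmatched entries.",
                              {"ledger_balance": "10500.00", "bank_balance": "10250.00"})
```

The resulting `messages` list follows the common system/user/assistant chat format. It could be passed to any chat-style inference endpoint.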

7. Agent-to-Agent Coordination

Complex financial workflows such as risk assessment, loan approvals, fraud detection, and compliance reporting often require multiple AI agents to collaborate. No single model or agent can handle every task in isolation — orchestration is essential.

Agent-to-Agent (A2A) coordination provides the structure needed for this collaboration:

Standardized messaging – Agents exchange information using consistent formats, ensuring interoperability across diverse models and tools.

Role tagging – Each agent is assigned a clear function (e.g., credit evaluator, fraud monitor, compliance checker) to avoid overlap and ensure accountability.

Shared state memory – Agents operate with continuity, sharing reasoning steps and context across workflows.

Turn-taking logic – Execution is coordinated between synchronous (e.g., settlement validation) and asynchronous (e.g., regulatory reporting) tasks.

This design mirrors the team-based collaboration on trading floors or in compliance offices, but now executed at machine speed and cloud scale.
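The coordination elements listed above can be illustrated with a small in-process sketch. It shows a standardized message envelope, role-tagged agents, shared state and a fixed turn order for a loan application. This is not the wire format of any published Agent-to-Agent protocol. The envelope fields, the scoring rules and the thresholds (credit score 650, amount above five months' income) are hypothetical. Each "agent" is a plain function standing in for a separate service. Only synchronous turn-taking is shown.

```python
import uuid
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class AgentMessage:
    """Hypothetical standardized envelope exchanged between agents."""
    sender_role: str
    recipient_role: str
    payload: dict
    correlation_id: str  # ties every message to one workflow instance


@dataclass
class Workflow:
    """Shared state memory plus a transcript for one workflow instance."""
    correlation_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    state: dict = field(default_factory=dict)
    transcript: list = field(default_factory=list)


def credit_evaluator(state: dict) -> dict:
    return {"credit_ok": state["credit_score"] >= 650}


def fraud_monitor(state: dict) -> dict:
    return {"fraud_flag": state["amount"] > 5 * state["monthly_income"]}


def compliance_checker(state: dict) -> dict:
    approved = state["credit_ok"] and not state["fraud_flag"]
    return {"decision": "approve" if approved else "refer_to_human"}


# Role tagging: each function has exactly one named responsibility.
TURN_ORDER: list[tuple[str, Callable[[dict], dict]]] = [
    ("credit_evaluator", credit_evaluator),
    ("fraud_monitor", fraud_monitor),
    ("compliance_checker", compliance_checker),
]


def run(workflow: Workflow) -> Workflow:
    # Turn-taking: each role acts once, in order, reading and extending the shared state.
    for i, (role, agent) in enumerate(TURN_ORDER):
        result = agent(workflow.state)
        workflow.state.update(result)
        next_role = TURN_ORDER[i + 1][0] if i + 1 < len(TURN_ORDER) else "orchestrator"
        workflow.transcript.append(AgentMessage(role, next_role, result, workflow.correlation_id))
    return workflow


wf = run(Workflow(state={"credit_score": 700, "amount": 20000, "monthly_income": 5000}))
print(wf.state["decision"])  # prints "approve"
```

The transcript of envelopes doubles as a record of which role contributed what. That record connects naturally to the auditability requirements discussed under governance.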

Kubernetes provides the scalable, multicloud foundation where agents run as containerized services.

Frameworks such as Llama Stack, LangChain, and n8n streamline model deployment and simplify the developer experience, making A2A orchestration as accessible as microservices.

TrustyAI adds guardrails for fairness, explainability, and compliance.

A vendor with a secure software supply chain ensures that models, such as those from the Red Hat Model Hub, are verified, reproducible, and policy-compliant.

Frameworks like LangChain, LangGraph, and CrewAI can be deployed directly by developers, with Red Hat’s serverless operators giving them freedom of choice while maintaining enterprise governance.

8. Governance and Trust

Governance is the foundation of safe, enterprise-grade adoption of Agentic AI. The right vendor extends proven enterprise governance practices to meet emerging AI-specific obligations such as the EU AI Act and ISO/IEC 42001.

Auditability: Every decision leaves a traceable log for compliance, regulatory, and internal review.

Human-in-the-Loop (HITL): Sensitive or high-stakes workflows (e.g., credit approvals, fraud alerts) always allow human oversight and intervention.

Guardrails: Policies, role-based permissions, simulation testing, and continuous monitoring keep agents aligned with business rules and compliance.

Risk Mitigation: Phased deployment (sandbox → pilot → production) minimizes exposure while ensuring reliability at scale.
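Two of these controls, auditability and human-in-the-loop, can be sketched together. In the version below, each decision is appended to a hash-chained log so that later edits become detectable. A hypothetical amount threshold routes high-stakes credit decisions to a human instead of letting the agent finalize them. The 50,000 EUR limit, the field names and the agent name are illustrative assumptions, not regulatory values.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log; each entry stores the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent: str, decision: str, inputs: dict,
               reviewer: Optional[str] = None) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"timestamp": datetime.now(timezone.utc).isoformat(), "agent": agent,
                "decision": decision, "inputs": inputs, "reviewer": reviewer,
                "prev_hash": prev_hash}
        body["hash"] = _digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        # Recompute every hash; any edited or removed entry breaks the chain.
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


HITL_THRESHOLD_EUR = 50_000  # hypothetical policy limit for autonomous approvals


def decide_credit(log: AuditLog, amount: float, model_says_approve: bool) -> str:
    # Human-in-the-loop: above the threshold the agent may only recommend, not decide.
    if amount > HITL_THRESHOLD_EUR:
        decision = "pending_human_review"
    else:
        decision = "approve" if model_says_approve else "decline"
    log.append("credit_agent", decision, {"amount": amount, "model_says_approve": model_says_approve})
    return decision


log = AuditLog()
decide_credit(log, 12_000, model_says_approve=True)  # autonomous path
decide_credit(log, 80_000, model_says_approve=True)  # routed to a human reviewer
print(log.verify())
```

A production system would persist the log to write-once storage. Phased deployment would then amount to tightening or relaxing thresholds like `HITL_THRESHOLD_EUR` between sandbox, pilot and production.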

9. How to Choose an AI Vendor?

Agents as Microservices

- Agents run as containerized microservices on OpenShift.

- Deployable via Kubernetes or Serverless.

- Modular, scalable, observable.

- Supports any agentic framework: LangChain, LangGraph, CrewAI.
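Treating an agent as a microservice mostly means giving it a small, uniform HTTP surface. A health endpoint lets Kubernetes probes check the agent, and an invoke endpoint accepts work. The standard-library WSGI sketch below shows that surface. The `/healthz` and `/invoke` paths and the `handle_task` placeholder are hypothetical, and real agent logic from LangChain, LangGraph or CrewAI would replace `handle_task`. The `call` helper exercises the app in-process without opening a network port.

```python
import io
import json
from wsgiref.util import setup_testing_defaults


def handle_task(task: dict) -> dict:
    """Hypothetical placeholder for framework-specific agent logic."""
    return {"task_id": task.get("task_id"), "status": "completed"}


def app(environ, start_response):
    """Minimal WSGI agent service: a health probe plus an invoke endpoint."""
    path = environ.get("PATH_INFO", "/")
    method = environ.get("REQUEST_METHOD", "GET")
    if path == "/healthz" and method == "GET":
        status, body = "200 OK", {"status": "ok"}
    elif path == "/invoke" and method == "POST":
        length = int(environ.get("CONTENT_LENGTH") or 0)
        task = json.loads(environ["wsgi.input"].read(length) or b"{}")
        status, body = "200 OK", handle_task(task)
    else:
        status, body = "404 Not Found", {"error": "not found"}
    payload = json.dumps(body).encode()
    start_response(status, [("Content-Type", "application/json"),
                            ("Content-Length", str(len(payload)))])
    return [payload]


def call(method: str, path: str, body: bytes = b"") -> tuple[str, dict]:
    """Invoke the app in-process (no network), e.g. from a unit test."""
    environ = {"REQUEST_METHOD": method, "PATH_INFO": path,
               "CONTENT_LENGTH": str(len(body)), "wsgi.input": io.BytesIO(body)}
    setup_testing_defaults(environ)  # fills in the remaining required WSGI keys
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    result = b"".join(app(environ, start_response))
    return captured["status"], json.loads(result)
```

For local experimentation the same `app` can be served with `wsgiref.simple_server.make_server("", 8080, app).serve_forever()`. In a container, a production WSGI server would normally take that role.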

Hybrid Cloud & Data Proximity

- Agents and inference run close to customer data.

- Works across any public cloud, on-prem, or edge.

- Supports data residency, sovereignty, and compliance.

- Reduces latency for real-time financial services.
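Data proximity and residency can be enforced at the point where an agent picks its inference endpoint. The sketch below selects an endpoint in the same jurisdiction as the data and prefers the location closest to it. It refuses to fall back to another jurisdiction. The endpoint inventory, URLs and jurisdiction codes are hypothetical. A real deployment would source them from platform configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceEndpoint:
    url: str
    jurisdiction: str  # legal jurisdiction where the endpoint runs
    location: str      # on-prem data center, public cloud region, or edge site


# Hypothetical inventory spanning on-prem and public cloud.
ENDPOINTS = [
    InferenceEndpoint("https://inference.onprem-fra.example.internal", "EU", "on-prem Frankfurt"),
    InferenceEndpoint("https://inference.cloud-eu.example.com", "EU", "public cloud EU region"),
    InferenceEndpoint("https://inference.cloud-us.example.com", "US", "public cloud US region"),
]


def select_endpoint(data_jurisdiction: str, prefer: str = "on-prem") -> InferenceEndpoint:
    """Return an endpoint in the data's jurisdiction; never cross borders silently."""
    compliant = [e for e in ENDPOINTS if e.jurisdiction == data_jurisdiction]
    if not compliant:
        raise LookupError(f"no inference endpoint available in {data_jurisdiction}")
    # Endpoints whose location matches the preference sort first (False < True).
    compliant.sort(key=lambda e: prefer not in e.location)
    return compliant[0]
```

Raising an error rather than routing elsewhere is the key design choice. A residency violation then surfaces as an operational failure instead of a silent compliance breach.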

Seamless Inference with vLLM

- Integrated high-performance inference runtime.

- Low latency, efficient scaling for GenAI workloads.
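vLLM ships an OpenAI-compatible HTTP server, started for example with `vllm serve <model>`. By default it listens on port 8000 and exposes `/v1/chat/completions`. Agents can therefore call it with a plain HTTP request. The sketch below builds, but does not send, such a request using only the standard library. The host name and model identifier are placeholders.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 256, temperature: float = 0.0) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for a vLLM server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        # Temperature 0 selects greedy decoding, for more repeatable answers.
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("http://vllm.example.internal:8000", "example-org/example-model",
                         "Summarize today's failed settlements.")
# Against a running server, the request would be sent like this:
# with urllib.request.urlopen(req, timeout=30) as resp:
#     answer = json.load(resp)["choices"][0]["message"]["content"]
```

Because the interface is OpenAI-compatible, agent frameworks that already support that API can usually be pointed at a vLLM endpoint by changing only the base URL.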

Fine-Tuning & Customization

- Purpose-built tuning labs.

- Customize models for domain-specific language, workflows, and regulations.

Trust & Compliance with TrustyAI

- Guardrails for fairness, transparency, accountability.

- Monitors for bias and ensures auditable decisions.
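Bias monitoring of this kind usually rests on group fairness metrics. Two widely used ones are statistical parity difference (SPD) and disparate impact ratio (DIR), and TrustyAI documents both. The plain-Python sketch below computes them by hand to show what is being measured. It does not use the TrustyAI API. The group labels and approval outcomes are made up. The 0.8 reference point mentioned in the comments is the commonly cited "four-fifths" rule of thumb, not a universal threshold.

```python
def selection_rate(outcomes: list[int], groups: list[str], group: str) -> float:
    """Share of favorable outcomes (1 = approved) within one group."""
    selected = [o for o, g in zip(outcomes, groups) if g == group]
    if not selected:
        raise ValueError(f"no records for group {group!r}")
    return sum(selected) / len(selected)


def fairness_report(outcomes: list[int], groups: list[str],
                    privileged: str, unprivileged: str) -> dict[str, float]:
    p_priv = selection_rate(outcomes, groups, privileged)
    p_unpriv = selection_rate(outcomes, groups, unprivileged)
    return {
        # Statistical parity difference: 0 means equal approval rates.
        "spd": p_unpriv - p_priv,
        # Disparate impact ratio: 1 means parity; values below ~0.8 often trigger review.
        # Undefined (NaN) when the privileged group has no approvals.
        "dir": p_unpriv / p_priv if p_priv else float("nan"),
    }


report = fairness_report(
    outcomes=[1, 1, 1, 0, 1, 0, 0, 0],
    groups=["group_a"] * 4 + ["group_b"] * 4,
    privileged="group_a",
    unprivileged="group_b",
)
```

In this toy data, group_a is approved 75% of the time and group_b 25%. That gives an SPD of -0.5 and a DIR of about 0.33, the kind of gap continuous monitoring is meant to flag.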

Secure Model Supply Chain

- Hugging Face delivers curated, verified, and secure models.

- Backed by Red Hat’s enterprise software supply chain protections.
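Provenance checks ultimately reduce to verifying that the model files you deploy are byte-for-byte the ones the supplier published. The sketch below streams each file through SHA-256 and compares it with an expected digest. It assumes a manifest mapping file names to digests. The manifest format is hypothetical. In practice it would come from a signed, vendor-published source rather than from the model directory itself.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file so multi-gigabyte weights never need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifacts(model_dir: Path, manifest: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means every file matched."""
    problems = []
    for name, expected in manifest.items():
        path = model_dir / name
        if not path.is_file():
            problems.append(f"{name}: missing")
        elif sha256_of(path) != expected:
            problems.append(f"{name}: digest mismatch")
    return problems
```

A deployment pipeline would run this check before a model is loaded into the inference runtime. Any non-empty result would block the rollout.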

“An agentic platform provides a developer-first, enterprise-ready platform for Agentic AI at scale.”

10. Conclusion and Next Steps

Agentic AI represents a profound shift in how financial services operate, combining the automation of GenAI with the resilience of enterprise platforms. The architecture outlined here ensures:

Scalability – A multi-cloud, container-native foundation.

Trust – Governance-first adoption with TrustyAI.

Choice – Developer freedom with Llama Stack and open frameworks.

Security – A verified model supply chain from the right vendor.

Executives should begin with concrete steps:

- Launch pilot projects in sandboxed environments.

- Establish governance and compliance frameworks early.

- Build AI literacy across technology and business functions.

- Adopt a platform-first strategy to innovate without compromising trust.

References

[3] https://neuron.expert/news/agentic-ai-market-size-to-hit-usd-19905-billion-by-2034/14114/en

[4] https://www.exelatech.com/blog/roi-robotic-process-automation-comprehensive-analysis
